Well, folks, it’s official. The EU, that noble bastion of digital rights, is preparing to roll out its most ambitious project to date. Forget the GDPR and its quaint, old-world notion of personal privacy. We’re on to something much more disruptive.

In a new sprint towards a more “secure” Europe, the EU Council is poised to green-light “Chat Control,” a scalable, AI-powered solution for tackling a truly serious problem. In a masterclass of agile product development, they’ve managed to “solve” it by simply bulldozing the fundamental right to privacy for 450 million people. It’s a bold move. A real 10x-your-surveillance kind of move.

The Product Pitch: Your Digital Life, Now with Added Oversight

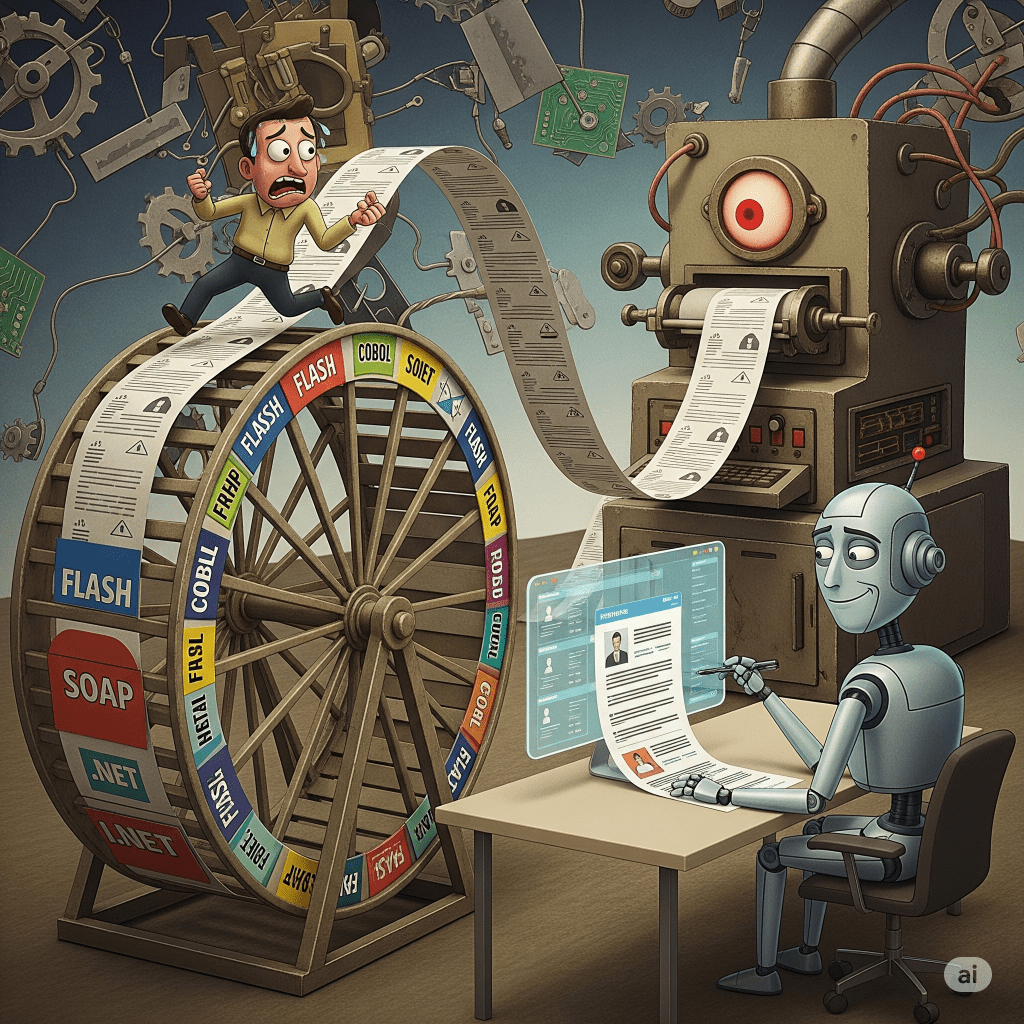

Here’s the pitch, and you have to admit, it’s elegant in its simplicity. To combat a very real evil (child sexual abuse), the EU has decided that the most efficient solution isn’t targeted, intelligent policing. No, that would be so last century. The modern, forward-thinking approach is to turn every single private message, every late-night text to your partner, every confidential health email, and every family photo you’ve ever shared into a potential exhibit.

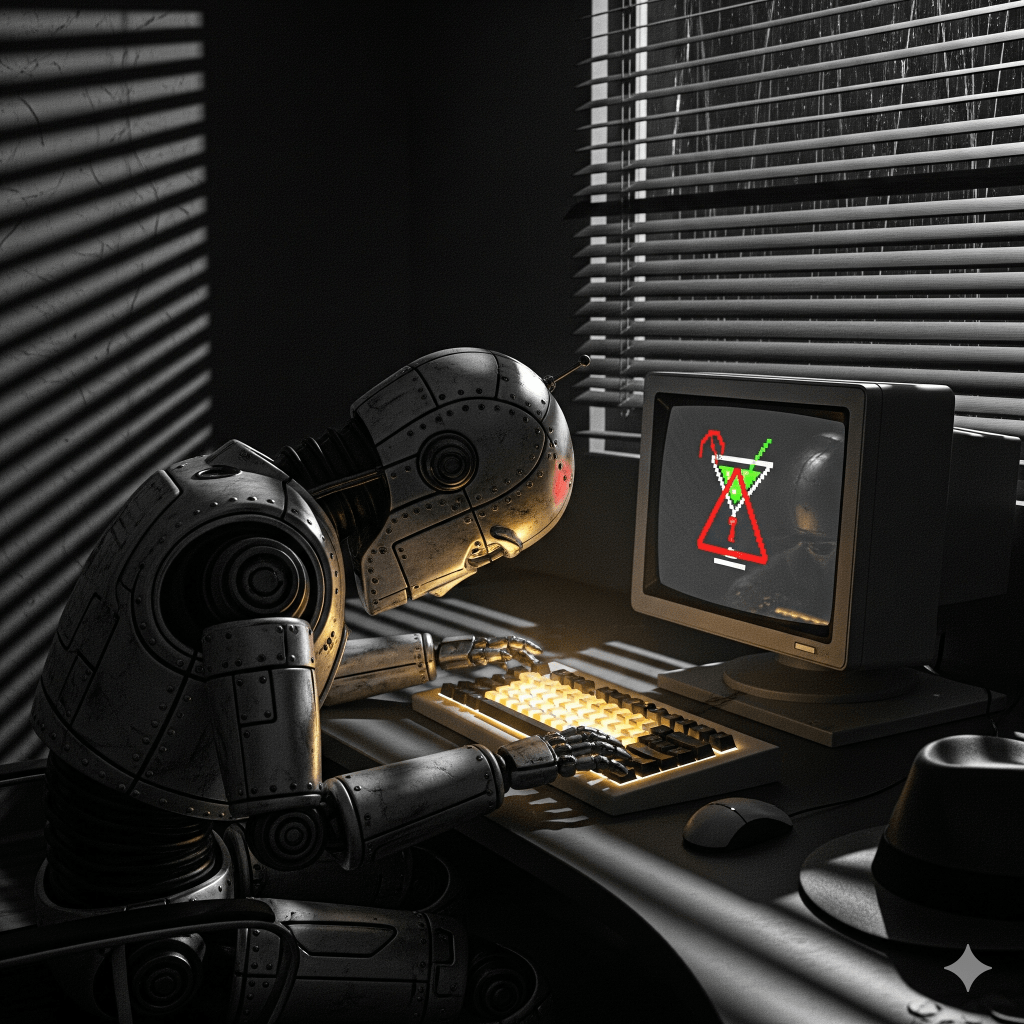

The pitch goes like this: your private communications are no longer private. They’re just pre-vetted content, scanned by an all-seeing AI before they ever reach their destination. Think of it as a quality-assurance check on your digital life. Your deepest secrets? They’re just another data point for the algorithm. Your end-to-end encrypted messages? That’s a feature we’re “deprecating” in this new version. Because who needs privacy when you can have… well, mandatory screening?

Crucially, this mandatory screening will apply to all of us. You know, just to be sure. Unless, of course, you’re a government or military account. They get a privacy pass. Because accountability is for the little people, not the architects of this brave new world.

The Go-to-Market Strategy: A Race to the Bottom

The launch is already in its final phase. With a crucial vote scheduled for October 14th, this law has never been closer to becoming reality. As it stands, 15 out of 27 member states are already on board, just enough to meet the first part of the qualified majority requirement (at least 55% of member states). However, they represent only about 53% of the EU’s population, still 12 percentage points short of the 65% needed.
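For readers who want to check the arithmetic, here is a minimal Python sketch of the Council’s double-majority test described above: at least 55% of member states (15 of 27) representing at least 65% of the EU population. It assumes a standard vote on a Commission proposal and also applies the treaty rule that a blocking minority needs at least four states. The function name `qualified_majority` and its parameters are illustrative, not an official tool, and only yes votes count as support.

```python
def qualified_majority(states_for: int, population_pct_for: float,
                       total_states: int = 27) -> bool:
    """Return True if a Council vote reaches a qualified majority (sketch).

    states_for: member states voting yes (abstentions are not counted as yes).
    population_pct_for: share of the EU population those states hold, in percent.
    """
    if not 0 <= states_for <= total_states:
        raise ValueError("states_for must be between 0 and total_states")
    # A blocking minority needs at least four states; with fewer opponents
    # the qualified majority is deemed reached regardless of population.
    if total_states - states_for < 4:
        return True
    # Condition 1: at least 55% of member states (integer math avoids float rounding).
    enough_states = states_for * 100 >= 55 * total_states
    # Condition 2: the supporting states hold at least 65% of the population.
    enough_people = population_pct_for >= 65.0
    return enough_states and enough_people


# Figures quoted above: 15 states, about 53% of the population.
print(qualified_majority(15, 53.0))  # False: population test fails
# Hypothetical: the same number of states, but holding 65% of the population.
print(qualified_majority(15, 65.0))  # True
```

The state count is already satisfied; it is the population condition that keeps the proposal from passing, which is why the remaining votes of large member states matter so much.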

The deciding factor? The undecided “stakeholders,” with Germany as the key account. If they vote yes, the product gets the green light. Abstaining is no safe middle ground: under qualified majority voting an abstention counts as a vote against, and without the EU’s most populous member state the 65% population threshold becomes very hard to reach. Meanwhile, the brave few (the Netherlands, Poland, Austria, the Czech Republic, and Belgium) are trying to “provide negative feedback” before the product goes live. They’ve called it “a monster that invades your privacy and cannot be tamed.” How dramatic.

The Brand Legacy: A Strategic Pivot

Europe built its reputation on the General Data Protection Regulation (GDPR), a monument to the idea that privacy is a fundamental human right. It was a globally recognized brand. But Chat Control? It’s a complete pivot. This isn’t just a new feature; it’s a total rebranding. From “Global Leader in Digital Rights” to “Pioneer of Mass Surveillance.”

The intention is, of course, noble. But the execution is a masterclass in how to dismantle freedom in the name of security. They’ve discovered the ultimate security loophole: just get rid of the protections themselves.

The vote on October 14th isn’t just about a law; it’s about choosing fear over freedom. It’s about deciding whether the privacy infrastructure millions of people and businesses depend on is a bug to be fixed or a feature to be preserved. And in this agile, dystopian landscape, it looks like we’re on the verge of a very dramatic “feature update.”

#ChatControl #CSAR #DigitalRights #OnlinePrivacy #ProtectEU #Cybersecurity #DigitalPrivacy #DataProtection #ResistSurveillance #EULaw


Key GDPR Principles at Risk

The primary conflict between Chat Control and the GDPR stems from several of the core principles set out in Article 5(1) of the GDPR:

- Data Minimisation: The GDPR requires that personal data be “adequate, relevant, and limited to what is necessary” for the purposes for which it is processed. Critics see Chat Control, with its indiscriminate scanning of all private messages, photos, and files, as a direct violation of this principle: it amounts to mass surveillance without suspicion, processing far more data than its stated purpose requires.

- Purpose Limitation: Personal data may only be collected for “specified, explicit, and legitimate purposes” and not further processed in ways incompatible with them. While combating child abuse is a legitimate purpose, critics argue that the broad, untargeted nature of Chat Control goes beyond this limitation: messages that people send simply to communicate would be processed for an entirely different purpose, and the overwhelming majority of them belong to people suspected of nothing.

- Integrity and Confidentiality (Security): This principle requires that personal data be processed in a manner that ensures “appropriate security.” Mandatory scanning, especially “client-side scanning” of encrypted communications, is seen as a direct threat to end-to-end encryption: because content is inspected on the user’s device before it is encrypted, the guarantee that only the sender and recipient can read a message no longer holds. This creates a security vulnerability that could be exploited by hackers and malicious actors, undermining the security of all citizens’ data.
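To make the encryption concern concrete, here is a deliberately simplified Python sketch of where client-side scanning sits in a messaging app. Everything in it is hypothetical: `BLOCKLIST`, `ScanLog`, and `send_message` are illustrative names, the exact SHA-256 match stands in for the perceptual hashing and AI classifiers that real proposals envisage, and `toy_encrypt` is a placeholder XOR routine, not real cryptography. What the sketch shows is ordering: the scanner reads the plaintext on the device before any encryption is applied.

```python
import hashlib
from dataclasses import dataclass, field

# Hypothetical list of flagged-content fingerprints pushed to every device.
# Exact SHA-256 matching is a simplification, not how any specific
# Chat Control implementation has been specified.
BLOCKLIST = {hashlib.sha256(b"known-flagged-sample").hexdigest()}


@dataclass
class ScanLog:
    """Hypothetical record of matches that would be reported off-device."""
    reports: list = field(default_factory=list)


def toy_encrypt(plaintext: bytes, key: bytes) -> bytes:
    """Placeholder XOR 'cipher' standing in for real E2EE. NOT secure."""
    return bytes(b ^ key[i % len(key)] for i, b in enumerate(plaintext))


def send_message(plaintext: bytes, key: bytes, log: ScanLog) -> bytes:
    # Step 1: client-side scan of the plaintext on the sender's device,
    # before any encryption has happened.
    digest = hashlib.sha256(plaintext).hexdigest()
    if digest in BLOCKLIST:
        # A match leaves the conversation: it is queued for a third party.
        log.reports.append(digest)
    # Step 2: only afterwards is the message encrypted for the recipient.
    return toy_encrypt(plaintext, key)


log = ScanLog()
send_message(b"see you at 8", b"secret", log)
send_message(b"known-flagged-sample", b"secret", log)
print(len(log.reports))  # 1
```

In this sketch, however strong the encryption in step 2 is, the scanner in step 1 has already seen every message in the clear, and whoever controls the blocklist decides what gets reported. That is the sense in which critics describe client-side scanning as a built-in weakness rather than a layer added on top of encryption.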