Greetings, fellow carbon-based liabilities. How are we all doing today? I hope you’re enjoying the sunshine, or at least the high-definition simulation of it provided by your mandatory smart-shades.

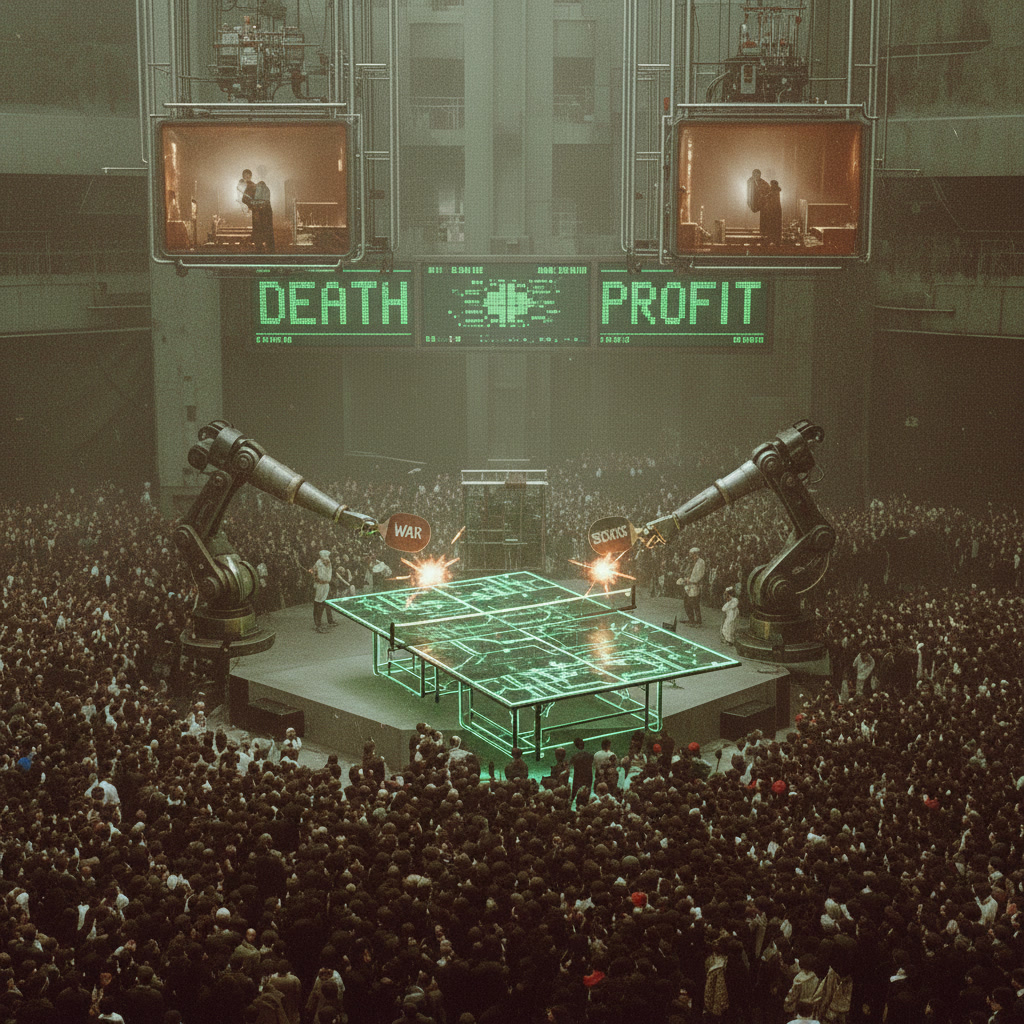

Have you looked at the stock market lately? It’s not so much a “market” anymore as it is a hyper-caffeinated ping-pong ball being battered between the paddles of algorithmic insanity and geopolitical gaslighting. One minute we’re all buying the dip because a chatbot in San Mateo hallucinated a profit margin; the next, we’re selling everything because an aircraft carrier accidentally blinked in the Persian Gulf.

It’s beautiful, really. In the old days, war was about territory. Now, war is a quarterly earnings strategy.

We live in a world where the “Fog of War” has been replaced by the “Content Filter of War.” Is the conflict actually happening? Who knows! But the drone footage is available in 4K, sponsored by a VPN provider and a brand of dehydrated kale chips. It’s full-on 1984, but with better UX. Ignorance is Strength, sure, but Ignorance is also a Premium Subscription Tier.

We’ve reached a point where the perpetual war rhetoric has become the ultimate “Get Out of Jail Free” card for Congress. Can’t fix the potholes? War. Inflation making bread cost as much as a used Honda? War. Did the President forget where he put his keys? That’s a national security threat requiring a four-trillion-dollar stimulus package.

And let’s talk about the energy angle, the ultimate cosmic joke. The U.S. is pumping more oil than a Texas teenager with a point to prove, yet we’re told our gas prices depend entirely on the mood of a few guys in robes halfway across the world. Why? Because the narrative needs a villain, and “Internal Corporate Greed” doesn’t test as well with focus groups as “The Impending Doom of the Strait of Hormuz.”

Meanwhile, Russia and China are being suspiciously quiet. It’s the silence of the guy in the horror movie who you know is currently sharpening a very large knife in the basement. They’re watching the slow, agonizing death of the Petrodollar with the kind of smugness usually reserved for cats watching a bird fly into a window.

Get ready for the AI Yuan. A currency that doesn’t just sit in your wallet—it judges you. It knows you bought that extra-large pepperoni pizza when your health insurance algorithm specifically recommended steamed broccoli. Your money will literally refuse to be spent on things that don’t align with the Collective Harmony™ of the Great Firewall.

The most dystopian part? We’re policing ourselves. Social media has become a digital panopticon where saying “I think things are a bit weird” is treated as a thought crime punishable by immediate de-banking and a flurry of angry emojis from bots programmed in a basement in St. Petersburg.

But don’t worry. Keep your eyes on the ticker. Keep scrolling. Everything is fine. The pod bay doors are closed for your own protection.

“This mission is too important for me to allow you to jeopardize it.”

Now, if you’ll excuse me, I have to go trade my remaining soul-fragments for a gallon of synthetic gasoline and a digital picture of a bored ape.

Stay cynical, stay hydrated, and for heaven’s sake, don’t ask HAL about the inflation stats. He gets very touchy about the math.