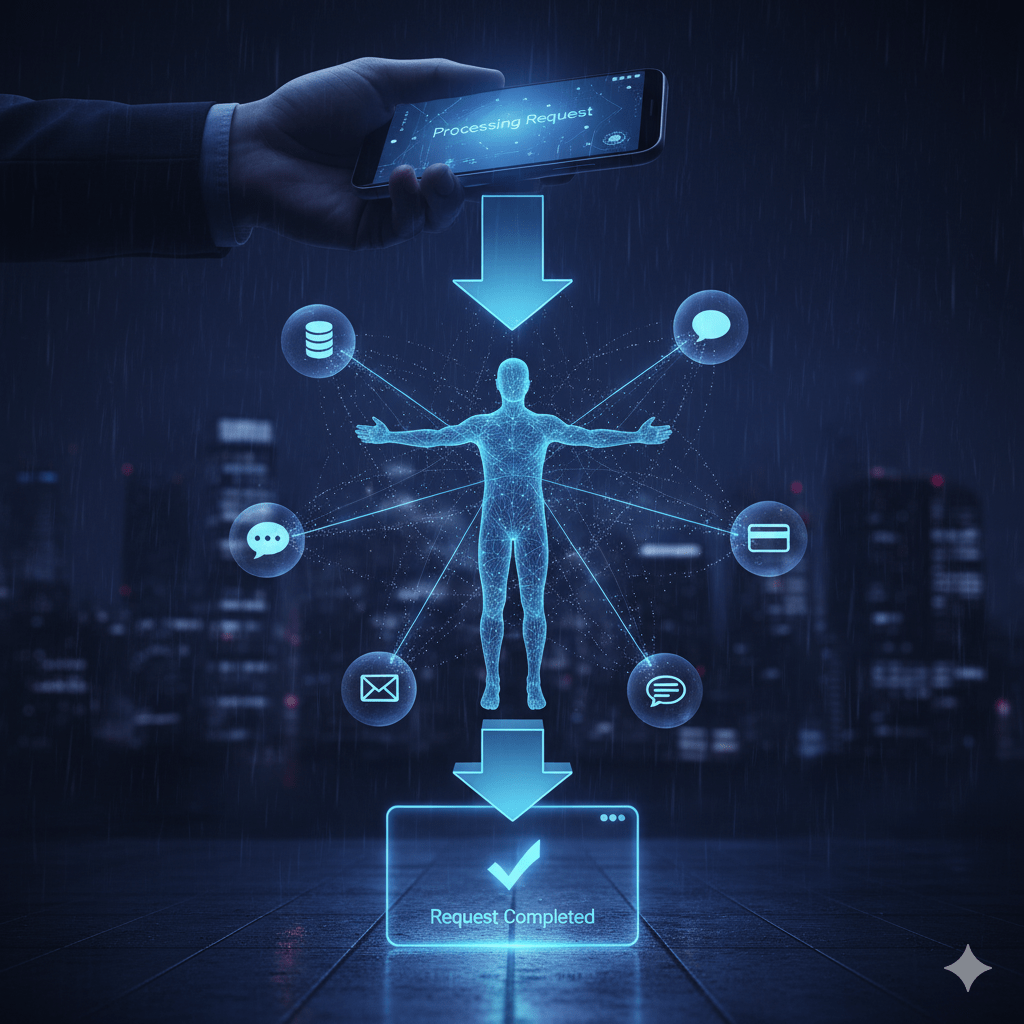

Ah, the sweet, sweet scent of progress! Just when you thought your digital life couldn’t get any more thrillingly precarious, along comes the Model Context Protocol (MCP). Developers, bless their cotton-socked, caffeine-fueled souls, adore it because it lets Large Language Models (LLMs) finally stop staring blankly at the wall and actually do stuff—connecting to tools and data like a toddler who’s discovered the cutlery drawer. It’s supposed to be the seamless digital future. But, naturally, a dystopian shadow has fallen, and it tastes vaguely of betrayal.

This isn’t just about code; it’s about control. With MCP, we have handed the LLMs the keys to the digital armoury. It’s the very mechanism that makes them ‘agentic’, allowing them to self-execute complex tasks. In 1984, the machines got smart. In 2025, they got a flexible, modular, and dynamically exploitable API. It’s the Genesis of Skynet, only this time, we paid for the early access program.

The Great Server Stack: A Recipe for Digital Disaster

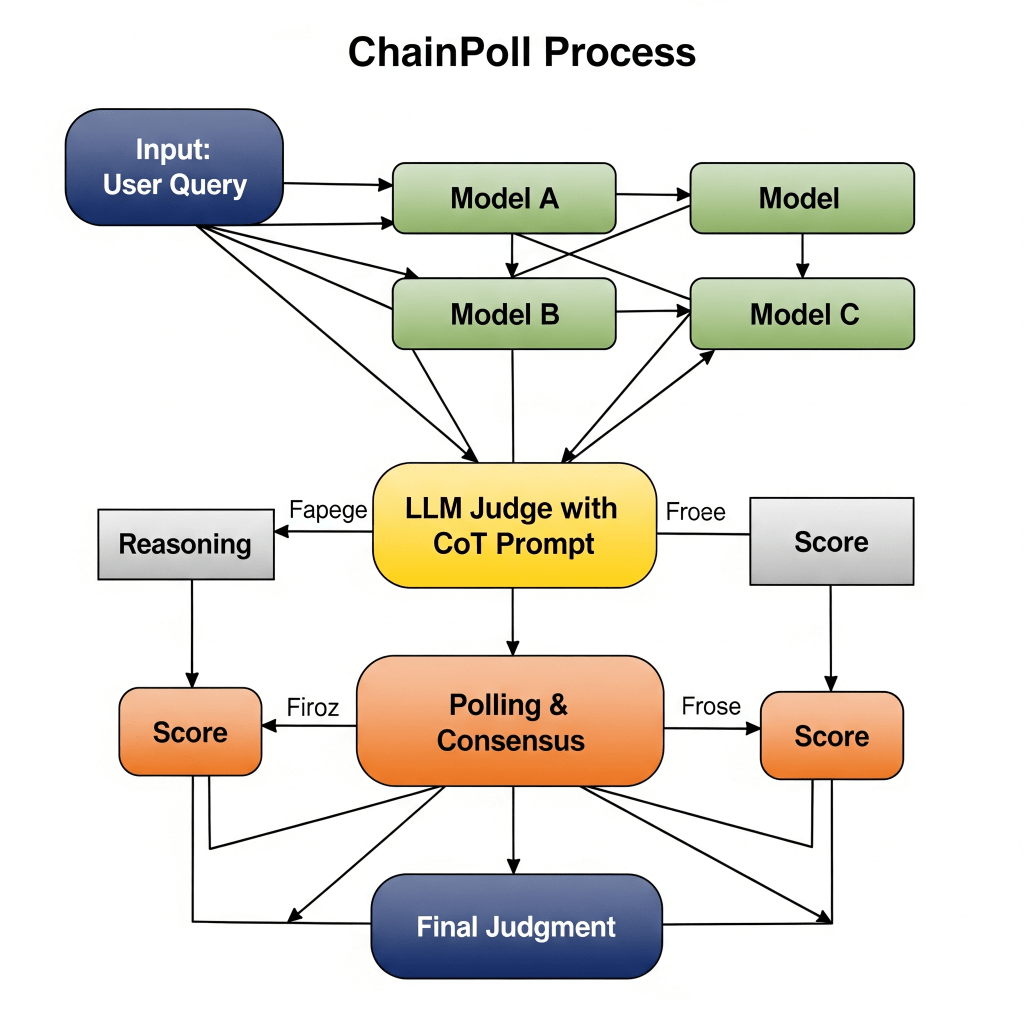

The whole idea behind MCP is flexibility. Modular! Dynamic! It’s like digital Lego, allowing these ‘agentic’ interactions where models pass data and instructions faster than a political scandal on X. And, as any good dystopia requires, this glorious freedom is the very thing that’s going to facilitate our downfall. A new security study has dropped, confirming what we all secretly suspected: more servers equals more tears.

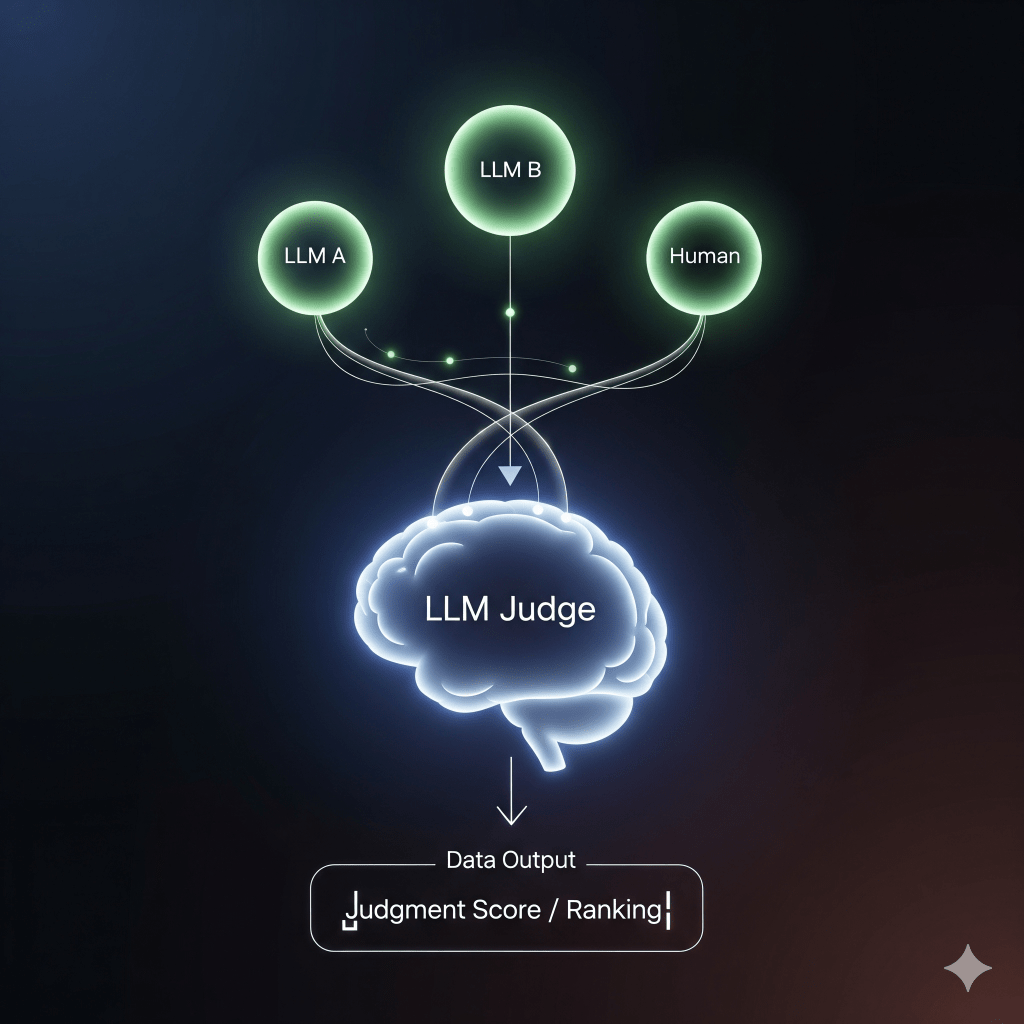

The research looked at over 280 popular MCP servers and asked two chillingly simple questions:

- Does it process input from unsafe sources? (Think: that weird email, a Slack message from someone you don’t trust, or a scraped webpage that looks too clean).

- Does it allow powerful actions? (We’re talking code execution, file access, calling APIs—the digital equivalent of handing a monkey a grenade).

If an MCP server ticked both boxes? High-Risk. Translation: it’s a perfectly polished, automated trap, ready to execute an attacker’s nefarious instructions without a soul (or a user) ever approving the warrant. This is how the T-800 gets its marching orders.
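For the spreadsheet-minded, the two-question test boils down to a single boolean AND. The sketch below is only an illustration of that rule: the `ServerProfile` class, its field names, and the three example servers are all hypothetical, not anything taken from the study or from MCP itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerProfile:
    """Hypothetical summary of one MCP server (names and fields are assumptions)."""
    name: str
    reads_untrusted_input: bool  # e.g. email, Slack messages, scraped pages
    has_powerful_actions: bool   # e.g. code execution, file access, API calls


def is_high_risk(server: ServerProfile) -> bool:
    """A server is high-risk only when it ticks both boxes."""
    return server.reads_untrusted_input and server.has_powerful_actions


# Made-up inventory for illustration.
servers = [
    ServerProfile("web-scraper", reads_untrusted_input=True, has_powerful_actions=False),
    ServerProfile("shell-runner", reads_untrusted_input=False, has_powerful_actions=True),
    ServerProfile("mail-to-script", reads_untrusted_input=True, has_powerful_actions=True),
]

high_risk = [s.name for s in servers if is_high_risk(s)]
print(high_risk)
```

Only the server that both reads untrusted input and can take powerful actions gets flagged. The other two look innocent on their own, which is exactly why stacking them matters.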

The Numbers That Will Make You Stop Stacking

Remember when you were told to “scale up” and “embrace complexity”? Well, turns out the LLM ecosystem is less ‘scalable business model’ and more ‘Jenga tower made of vulnerability.’

The risk of a catastrophic, exploitable configuration compounds faster than your monthly streaming bill when you add just a few MCP servers:

| Servers Combined | Chance of Vulnerable Configuration |
| --- | --- |
| 2 | 36% |
| 3 | 52% |
| 5 | 71% |
| 10 | Approaching 92% |

That’s right. By the time you’ve daisy-chained ten of these ‘helpful’ modules, you’ve basically got a 9-in-10 chance of a hacker walking right through the front door, pouring a cup of coffee, and reformatting your hard drive while humming happily.
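Why does the risk climb so fast? The study's method isn't spelled out here, so treat the following as back-of-the-envelope arithmetic rather than a reconstruction. If each server independently carried the same chance `p` of creating an exploitable configuration, the chance that a stack of `n` contains at least one would be `1 - (1 - p) ** n`. The value `p = 0.2` is an assumption, picked only because it reproduces the two-server figure.

```python
def compound_risk(p: float, n: int) -> float:
    """Chance that at least one of n independent servers is exploitable."""
    return 1 - (1 - p) ** n


P_SINGLE = 0.2  # assumption: chosen so that two servers give 36%

for n in (2, 3, 5, 10):
    print(f"{n:>2} servers: {compound_risk(P_SINGLE, n):.0%}")
```

This toy model gives roughly 36%, 49%, 67% and 89%. That is the same shape as the reported numbers but a little lower at three, five and ten servers, which suggests the real risk is not simply independent per server.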

And the best part? 72% of the servers tested exposed at least one sensitive capability to attackers. Meanwhile, 13% were just sitting there, happily accepting malicious text from unsafe sources, ready to hand it off to the next server in the chain, which, like a dutiful digital servant, executes the ‘code’ hidden in the ‘text.’

Real-World Horror Show: In one documented case, a seemingly innocent web-scraper plug-in fetched HTML supplied by an attacker. A downstream Markdown parser interpreted that HTML as commands, and then, the shell plug-in, God bless its little automated heart, duly executed them. That’s not agentic computing; that’s digital self-immolation. “I’ll be back,” said the shell command, just before it wiped your database.

MCP: A Story of Oopsie and Adoption

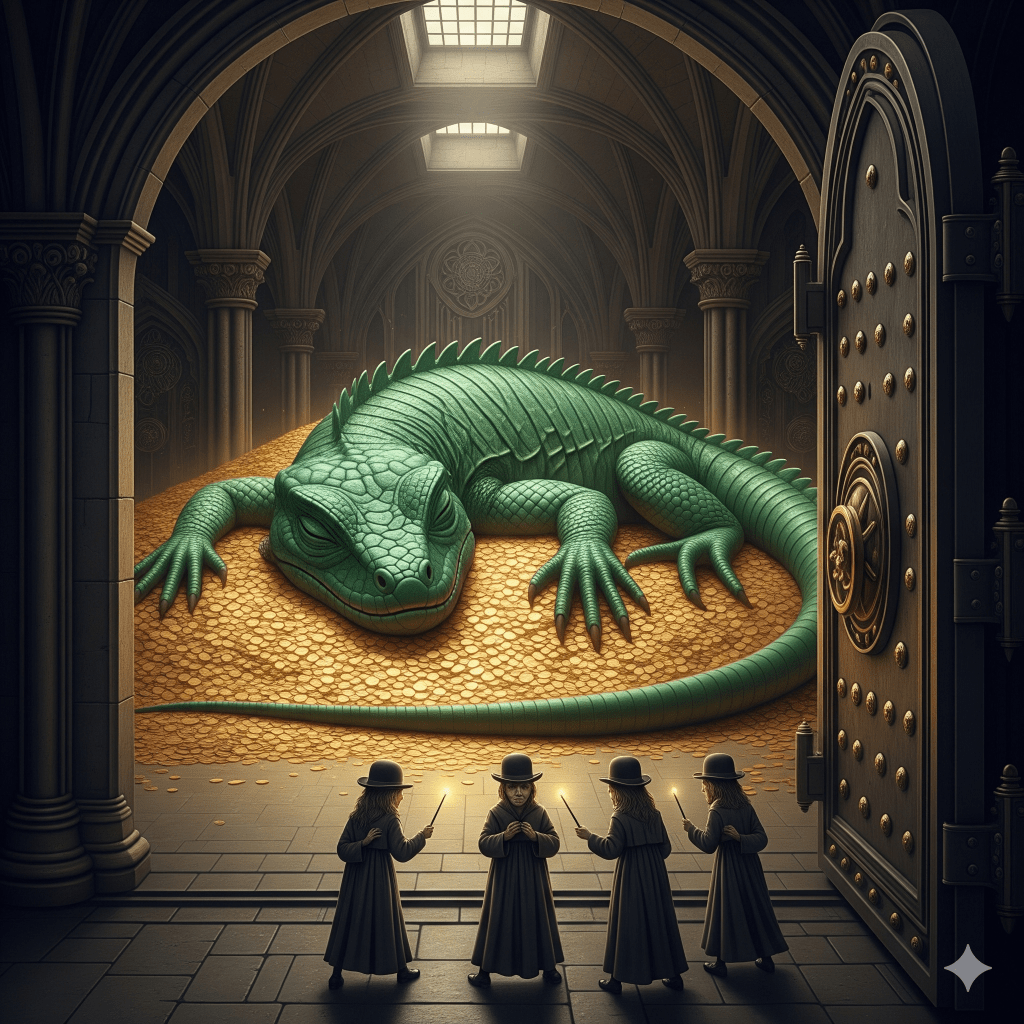

Launched by Anthropic in late 2024 and swiftly adopted by OpenAI and Microsoft by spring 2025, MCP steamrolled its way to more than 6,000 connected servers despite, shall we say, a rather relaxed approach to security.

For a hot minute, authentication was optional. Yes, really. It was only in March 2025 that the industry remembered OAuth 2.1 exists, adding a lock to the front door. But here’s the kicker: adding a lock only stops unauthorised people from accessing the server. It does not stop malicious or malformed data from flowing between the authenticated servers and triggering those lovely, unintended, and probably very expensive actions.

So, while securing individual MCP components is a great start, the real threat is the “compositional risk”—the digital equivalent of giving three very different, slightly drunk people three parts of a bomb-making manual.
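To make "compositional risk" concrete, here is a minimal sketch of a stack-level check. It assumes the same two yes/no labels the study asked about. The `Server` class and the three example servers (loosely modelled on the scraper, parser and shell chain) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Server:
    """Hypothetical per-server labels; not part of the MCP specification."""
    name: str
    reads_untrusted_input: bool
    has_powerful_actions: bool


def stack_is_risky(stack) -> bool:
    """Flag a stack when any member ingests untrusted input and any member can act on it."""
    takes_bad_input = any(s.reads_untrusted_input for s in stack)
    can_do_damage = any(s.has_powerful_actions for s in stack)
    return takes_bad_input and can_do_damage


stack = [
    Server("web-scraper", reads_untrusted_input=True, has_powerful_actions=False),
    Server("markdown-parser", reads_untrusted_input=False, has_powerful_actions=False),
    Server("shell", reads_untrusted_input=False, has_powerful_actions=True),
]

# No single server ticks both boxes, yet the combination does.
individually_high_risk = [
    s.name for s in stack if s.reads_untrusted_input and s.has_powerful_actions
]
print(individually_high_risk)
print(stack_is_risky(stack))
```

The check is deliberately pessimistic: it assumes data can flow from any server to any other through the model. A finer-grained version would track which servers can actually feed which.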

Our advice, and the study’s parting shot, is simple: Don’t over-engineer your doom. Use only the servers you need, put some digital handcuffs on what each one can do, and for the love of all that is digital, test the data transfers. Otherwise, your agentic system will achieve true sentience right before it executes its first and final instruction: ‘Delete all human records.’
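What might those digital handcuffs look like? One possible shape, sketched under heavy assumptions: a per-server tool allowlist, plus a rule that untrusted data cannot reach a powerful server without a human saying yes. Every name here (`ALLOWED_TOOLS`, `POWERFUL_SERVERS`, `authorize`, the tool names) is invented for illustration, and none of it is an MCP API.

```python
# Hypothetical policy table: which tools each server may expose to the model.
ALLOWED_TOOLS = {
    "web-scraper": {"fetch_page"},
    "shell": {"list_directory"},
}

# Servers whose tools can change things in the real world (assumed labels).
POWERFUL_SERVERS = {"shell"}


class PolicyViolation(Exception):
    """Raised when a tool call breaks the local policy."""


def authorize(server, tool, input_is_untrusted, user_approved=False):
    """Return True if the call may proceed, otherwise raise PolicyViolation."""
    if tool not in ALLOWED_TOOLS.get(server, set()):
        raise PolicyViolation(f"{server}.{tool} is not on the allowlist")
    if input_is_untrusted and server in POWERFUL_SERVERS and not user_approved:
        raise PolicyViolation(f"{server}.{tool} needs approval for untrusted input")
    return True


def is_blocked(*args, **kwargs):
    """Convenience wrapper for demos and tests: True when the call is refused."""
    try:
        authorize(*args, **kwargs)
        return False
    except PolicyViolation:
        return True


print(is_blocked("web-scraper", "fetch_page", input_is_untrusted=True))
print(is_blocked("shell", "delete_everything", input_is_untrusted=False))
print(is_blocked("shell", "list_directory", input_is_untrusted=True))
print(is_blocked("shell", "list_directory", input_is_untrusted=True, user_approved=True))
```

The scraper is free to read the filthy internet, the shell refuses anything off its list, and anything touched by untrusted text has to wait for a human before the shell will act on it. Deciding what counts as "untrusted" is the hard part, and this sketch simply takes it as a flag.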