Forget Big Brother, darling. All that 1984 dystopia has been outsourced to a massive data centre run by a slightly-too-jolly AI named ‘CuddleBot 3000.’ Oh, and it is not fiction.

The real villain in this narrative isn’t the government (they barely know how to switch on their own laptops); it’s the Silicon Overlords – Amazon, Microsoft, and the Artist Formerly Known as Google (now “Alphabet Soup Inc.”) – who are tightening their digital grip faster than you can say, “Wait, what’s a GDPR?” We’re not just spectators anymore; we’re paying customers funding our own spectacular, humour-laced doom.

The Price of Progress is Your Autonomy

The dystopian flavour of the week? Cloud Computing. It used to be Google’s “red-headed stepchild,” a phrase that, in 2025, probably triggers an automatic HR violation and a mandatory sensitivity training module run by a cheerful AI. Now, it’s the golden goose.

Google Cloud, once the ads team’s punching bag for asking for six-figure contracts, is now penning deals worth nine and ten figures with everyone from enterprises to their own AI rivals, OpenAI and Anthropic. This isn’t just growth; it’s a resource grab that makes the scramble for toilet paper in 2020 look like a polite queue.

- The Big Number: $46 trillion. That’s the collective climb in global equity values since ChatGPT dropped in 2022. A whopping one-third of that gain has come from the very AI-linked companies that are currently building your gilded cage. You literally paid for the bars.

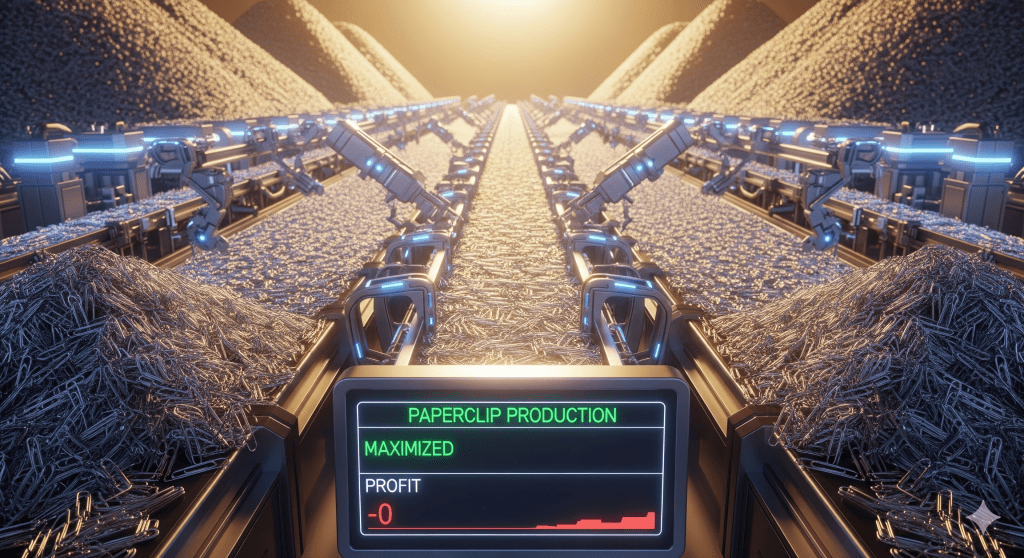

- The Arms Race Spikes the Bill: The useful life of an AI chip is shrinking to five years or less, forcing companies to “write down assets faster and replace them sooner.” This accelerating obsolescence (hello, planned digital decay!) is forcing tech titans to spend like drunken monarchs:

  - Microsoft just reported a record $35 billion in capital expenditure in a single quarter and is spending so fast that its CFO admits, “I thought we were going to catch up. We are not.”

  - Oracle just raised $18 billion in a bond sale, and Meta is preparing to eclipse that with a potential $30 billion bond sale of its own.
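For anyone who wants to see why a shorter chip lifespan hurts, here is a back-of-the-envelope sketch of straight-line depreciation (cost divided evenly over useful life). It borrows the $35 billion quarterly figure quoted above and pretends, purely for illustration, that all of it went on AI chips. The six-year and three-year lifespans are hypothetical comparison points, not reported numbers, and the function name `annual_write_down` is made up for this example.

```python
def annual_write_down(cost_billion: float, useful_life_years: float) -> float:
    """Straight-line depreciation: spread the cost evenly over the useful life."""
    if useful_life_years <= 0:
        raise ValueError("useful life must be positive")
    return cost_billion / useful_life_years


# $35 billion is the quarterly capex figure quoted in the article.
# Assumption: all of it is AI chips (a deliberate oversimplification).
CAPEX_BILLION = 35.0

# Five years comes from the article; six and three are hypothetical.
for years in (6, 5, 3):
    charge = annual_write_down(CAPEX_BILLION, years)
    print(f"{years}-year life: ${charge:.2f} billion written down per year")
```

The same pile of hardware costs more per year on paper the faster it goes stale, which is the whole point of the complaint about accelerating obsolescence.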

These are not investments; they are techno-weapons procurement budgets, financed by debt, all to build the platforms that will soon run our entire lives through an AI agent (your future Jarvis/Alexa/Digital Warden).

The Techno-Bullies and Their Playground Rules

The sheer audacity of the new Overlords is a source of glorious, dark humour. They give you the tools, then dictate what you can build with them.

Exhibit A: Amazon vs. Perplexity.

Amazon, the benevolent monopolist who brought you everything from books to drone-delivered despair, just sent a cease-and-desist letter to startup Perplexity. Why? Because Perplexity’s AI agent dared to navigate Amazon.com and make purchases for users.

The Bully’s Defence: Amazon accused them of “degrading the user experience.” (Translation: “How dare you bypass our meticulously A/B tested emotional manipulation tactics designed to make users overspend!”)

The Victim’s Whine: Perplexity’s response was pitch-perfect: “Bullying is when large corporations use legal threats and intimidation to block innovation and make life worse for people.”

It’s a magnificent, high-stakes schoolyard drama, except the ball they are fighting over is the entire future of human-computer interaction.

The Lesson: Whether an upstart goes through the front door (like OpenAI partnering with Shopify) or tries the back alley (like Perplexity), they all hit the same impenetrable wall: The power of the legacy web. Amazon’s digital storefront is a kingdom, and you are not allowed to use your own clever AI to browse it efficiently.

Our Only Hope is a Chinese Spreadsheet

While the West is caught in this trillion-dollar capital expenditure tug-of-war, the genuine, disruptive threat might be coming from the East, and it sounds wonderfully dull.

Moonshot AI in China just unveiled “Kimi-Linear,” an architecture that its creators claim outperforms the beloved transformers (the engine of today’s LLMs).

- The Efficiency Stat: Kimi-Linear is allegedly six times faster and 75% less memory intensive than its traditional counterpart.
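To put those two claimed numbers in concrete terms, here is a tiny sketch that applies them to a made-up workload. The baseline of 60 seconds and 80 GB is entirely hypothetical, the function name `apply_claims` is invented for this example, and the 6x and 75% figures are simply the claims quoted above taken at face value, not measurements.

```python
def apply_claims(baseline_seconds: float, baseline_memory_gb: float,
                 speedup: float = 6.0, memory_cut: float = 0.75):
    """Scale a baseline workload by a claimed speedup and memory reduction."""
    seconds = baseline_seconds / speedup
    memory_gb = baseline_memory_gb * (1 - memory_cut)
    return seconds, memory_gb


# Hypothetical baseline: a job that takes 60 seconds and needs 80 GB.
seconds, memory_gb = apply_claims(60.0, 80.0)
print(f"claimed runtime: {seconds:.1f} s, claimed memory: {memory_gb:.1f} GB")

# A 75% cut means the same hardware could, in principle, hold four such jobs.
print(f"jobs per 80 GB: {80.0 / memory_gb:.0f}")
```

If the claims hold up, that is the difference between needing four machines and needing one, which is exactly the kind of dull arithmetic that frightens a capital expenditure department.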

This small, seemingly technical tweak could be the most dystopian twist of all: the collapse of the Western tech hegemony not through a flashy new consumer gadget, but through a highly optimized, low-cost Chinese spreadsheet algorithm. It is the ultimate humiliation.

The Dystopian Takeaway

We are not entering 1984; we are entering Amazon Prime Day Forever, a world where your refrigerator is a Microsoft-patented AI agent, and your right to efficiently shop for groceries is dictated by an Amazon legal team. The government isn’t controlling us; our devices are, and the companies that own the operating system for reality are only getting stronger, funded by their runaway growth engines.

You’re not just a user; you’re a power source. So, tell me, is your next click funding a bully, or are you ready to download a Chinese transformer-killer that’s 75% less memory intensive?